Quantum computing relies on qubits whose energy scales, coherence times, and gate fidelities bound which architectures are practical. Each qubit brings probability amplitudes to compute with, along with an error budget and control requirements that shape both hardware and software stacks. Interference and entanglement let quantum algorithms surpass certain classical limits, but the advantage is conditional on mature error mitigation and benchmarking. The trajectory hinges on scalable modular designs and rigorous validation, and real-world performance at scale remains an open question.

What Each Qubit Really Brings to Quantum Computing

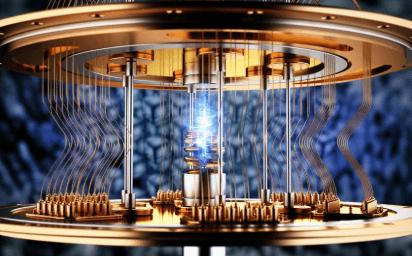

Quantum computing uses qubits as its fundamental information units. Each qubit can exist in a superposition of |0⟩ and |1⟩, with complex amplitudes whose squared magnitudes give the measurement probabilities. In superconducting devices, for example, qubit capacitance influences energy scales and control, while coherence time bounds how long a gate sequence can run.
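The relationship between amplitudes, probabilities, and coherence limits can be made concrete with a minimal sketch. The function names and the timing figures in the usage note are illustrative assumptions, not measurements from any particular device.

```python
def measurement_probabilities(alpha: complex, beta: complex) -> tuple[float, float]:
    """Return (P(0), P(1)) for the state alpha|0> + beta|1>.

    The amplitudes are normalized first, so any nonzero pair is accepted.
    """
    norm_sq = abs(alpha) ** 2 + abs(beta) ** 2
    if norm_sq == 0:
        raise ValueError("state vector must be nonzero")
    return abs(alpha) ** 2 / norm_sq, abs(beta) ** 2 / norm_sq


def max_sequential_gates(coherence_time_ns: int, gate_time_ns: int) -> int:
    """Rough upper bound on the gates that fit inside one coherence time.

    Assumes gates run back to back with no idle time; real devices
    lose fidelity well before this bound is reached.
    """
    if gate_time_ns <= 0:
        raise ValueError("gate time must be positive")
    return coherence_time_ns // gate_time_ns


# Equal-magnitude amplitudes (one of them imaginary) give a 50/50 outcome.
p0, p1 = measurement_probabilities(1, 1j)

# Hypothetical figures: 100 microseconds of coherence, 50 ns per gate.
depth_bound = max_sequential_gates(100_000, 50)
```

With these assumed figures the bound is 2000 sequential gates, which shows why coherence time and gate speed are usually quoted together.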

Experimental metrics quantify gate errors, fidelity, and decoherence, guiding scalable architectures toward reliable, programmable quantum advantage.

How Quantum Principles Break Classical Barriers

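How do quantum principles surpass classical limits in computation and information processing? Quantum algorithms manipulate probability amplitudes through superposition and interference, so that contributions to unwanted outcomes cancel while contributions to wanted outcomes reinforce. Experiments quantify the resulting speedups and identify the computational regimes where they exceed classical bounds.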
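Interference is easiest to see in the smallest possible case. The sketch below applies a Hadamard gate twice to |0⟩ using plain Python lists; the helper name `apply_gate` is an assumption made for illustration.

```python
import math

S = 1 / math.sqrt(2)
# Hadamard gate as a 2x2 matrix acting on [amp_0, amp_1].
HADAMARD = [[S, S],
            [S, -S]]


def apply_gate(gate, state):
    """Multiply a 2x2 gate matrix by a single-qubit state vector."""
    return [sum(gate[row][col] * state[col] for col in range(2))
            for row in range(2)]


zero = [1.0, 0.0]
superposed = apply_gate(HADAMARD, zero)      # equal amplitudes on |0> and |1>
recombined = apply_gate(HADAMARD, superposed)  # |1> contributions cancel

prob_one_midway = abs(superposed[1]) ** 2   # about 0.5
prob_one_final = abs(recombined[1]) ** 2    # about 0.0
```

After the first gate, a measurement would return 1 about half the time. After the second, the two paths leading to |1⟩ carry opposite signs and cancel, so the qubit returns to |0⟩. A classical coin flipped twice has no equivalent of this cancellation.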

Quantum entanglement enables correlated outcomes across subsystems, while precise decoherence management preserves coherence and fidelity.
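As a minimal sketch of such correlated outcomes, the code below prepares the two-qubit Bell state (|00⟩ + |11⟩)/√2 with a Hadamard followed by a CNOT and lists the outcome probabilities. The amplitude ordering [00, 01, 10, 11] and the function names are assumptions chosen for this example.

```python
import math


def bell_state():
    """Build (|00> + |11>)/sqrt(2) from |00> with H on qubit 0, then CNOT."""
    a00, a01, a10, a11 = 1.0, 0.0, 0.0, 0.0   # start in |00>
    s = 1 / math.sqrt(2)
    # Hadamard on the first qubit mixes amplitudes that differ in that qubit.
    state = [s * (a00 + a10), s * (a01 + a11),
             s * (a00 - a10), s * (a01 - a11)]
    # CNOT (control: first qubit, target: second) swaps |10> and |11>.
    state[2], state[3] = state[3], state[2]
    return state


def outcome_probabilities(state):
    """Map each two-bit outcome label to its measurement probability."""
    labels = ["00", "01", "10", "11"]
    return {label: abs(amp) ** 2 for label, amp in zip(labels, state)}


probs = outcome_probabilities(bell_state())
```

Only the outcomes 00 and 11 have nonzero probability, each about one half: either qubit alone looks random, yet the two results always agree.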

Systematic error budgets, tomography, and benchmark measurements validate performance gains without overclaiming, keeping interpretation rigorous and tied to measurable evidence.

Real-World Paths to Quantum Advantage

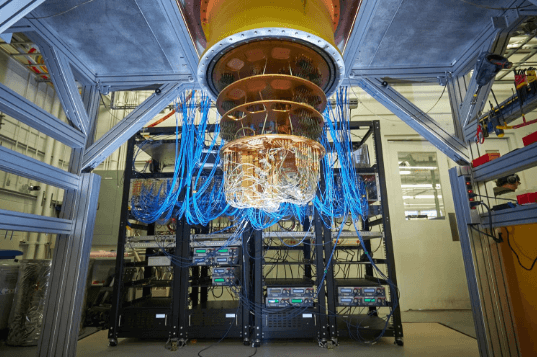

Real-world quantum advantage emerges from a measured combination of hardware maturity, problem structuring, and rigorous benchmarking. Empirical results quantify error budgets, coherence times, and gate fidelities, which set objective thresholds for practical tasks. Quantum error management, including both mitigation and correction, expands the range of feasible workloads. Advances in hardware fabrication make devices more reproducible, which in turn allows standardized testing protocols and cross-lab comparability for scalable, transparent assessments of performance gains.

Current Roadblocks and What It Takes to Scale Quantum

Despite rapid progress, scaling quantum systems remains constrained by intertwined hardware, software, and architectural challenges. Qubit quality, gate fidelity, and error budgets are the key measures, and errors remain the central problem: each architecture must show that it can operate below fault-tolerance thresholds. Experimental data highlight limits in cooling, isolation, and control bandwidth. Feasibility at scale is still speculative and hinges on modular designs, resource estimates, and scalable error correction under realistic noise profiles.
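To give a feel for what a resource estimate involves, here is a rough sketch based on a commonly quoted surface-code heuristic, in which the logical error rate falls as prefactor × (p / p_threshold)^((d + 1) / 2) for code distance d. The prefactor of 0.1, the threshold of 1%, and the 2d² − 1 qubit count for a rotated surface code are assumptions for illustration; actual values depend on the code, decoder, and noise model.

```python
def logical_error_rate(p_phys: float, distance: int,
                       p_threshold: float = 1e-2,
                       prefactor: float = 0.1) -> float:
    """Heuristic logical error rate per round (assumed scaling law)."""
    return prefactor * (p_phys / p_threshold) ** ((distance + 1) / 2)


def physical_qubits_per_logical(distance: int) -> int:
    """Data plus measurement qubits for one rotated surface-code patch."""
    return 2 * distance ** 2 - 1


def min_distance(p_phys: float, target: float,
                 p_threshold: float = 1e-2) -> int:
    """Smallest odd distance whose heuristic error rate meets the target."""
    if p_phys >= p_threshold:
        raise ValueError("physical error rate must be below threshold")
    distance = 3
    while logical_error_rate(p_phys, distance, p_threshold) > target:
        distance += 2  # surface-code distances are conventionally odd
    return distance


# Hypothetical inputs: 0.1% physical error rate, 5e-12 logical target.
d = min_distance(1e-3, 5e-12)
overhead = physical_qubits_per_logical(d)
```

The point of the sketch is the shape of the trade-off: below threshold, each step in distance suppresses logical errors exponentially, while the physical qubit count grows only quadratically. Above threshold, adding qubits makes things worse, which is why threshold demonstrations matter so much.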

See also: Quantum Hardware and Its Potential

Frequently Asked Questions

How Close Are Quantum Computers to Mainstream Everyday Use?

Mainstream use remains years away and depends on hardware milestones, error correction, and scalable architectures. Researchers report progress in qubit counts, fidelity, and modular designs, but practical, widespread use awaits robust, cost-effective, standardized ecosystems.

What Will Quantum Computing Cost for Businesses and Consumers?

The cost structure of quantum computing is still evolving. Businesses currently bear high upfront R&D costs, while consumer pricing is expected to trend downward and stabilize as hardware and software mature, helped by economies of scale and modular services.

Can Quantum Devices Run Existing Software Without Changes?

Quantum devices cannot run existing software unchanged. Qubits and their architectural constraints require programs to be rewritten for quantum hardware, and error mitigation and specialized compilers shape performance expectations across applications.

How Will Quantum Tech Impact Privacy and Security Today?

Consider a hypothetical quantum-enabled breach: a sufficiently capable quantum computer could undermine widely used classical cryptography, so privacy threats may escalate as hardware matures. Cryptographic resilience depends on adopting post-quantum standards. Because risk estimates point to a growing threat, proactive, data-centric protections are warranted today.

Are Quantum Computers Accessible to Students and Hobbyists?

Student-accessible systems exist but typically target institutions. Hobbyist-friendly options are limited yet growing, with open-source platforms, affordable kits, and cloud access. How far the barriers fall depends on cost, hardware availability, and instructional support for independent experimentation.

Conclusion

Quantum computing advances hinge on balancing qubit quality, control precision, and error mitigation. A representative metric illustrates progress: raising average gate fidelity from 99.0% to 99.9% over a five-year span cuts the per-gate error rate from 1% to 0.1%, tightening error budgets by a factor of ten and accelerating fault-tolerant prospects. Real-world demonstrations (modular architectures, standardized benchmarks, and rigorous validation) underscore that scalable quantum advantage rests on verifiable performance gains, not novelty alone. The path forward demands quantitative benchmarking, reproducible experiments, and disciplined hardware-software co-design.
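The factor of ten can be checked directly, and the same arithmetic shows why it matters for longer circuits. This is a minimal sketch that assumes gate errors are independent, which real devices only approximate; the 100-gate circuit is a hypothetical example.

```python
def error_rate(fidelity: float) -> float:
    """Per-gate error rate implied by an average gate fidelity."""
    return 1.0 - fidelity


def circuit_success_estimate(fidelity: float, num_gates: int) -> float:
    """Crude success estimate, assuming independent errors on every gate."""
    return fidelity ** num_gates


# Ratio of per-gate error rates before and after the improvement.
improvement = error_rate(0.990) / error_rate(0.999)   # about 10

# Hypothetical 100-gate circuit at each fidelity.
before = circuit_success_estimate(0.990, 100)   # roughly 0.37
after = circuit_success_estimate(0.999, 100)    # roughly 0.90
```

Under this simple model, a tenfold drop in per-gate error turns a circuit that fails most of the time into one that usually succeeds.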